Aquantia AQtion AQC107 vs Intel X550-T2 - comparing 10G interfaces in iSCSI and CIFS file operations

Two or three years ago almost nobody had heard of the young company Aquantia, and today its network controllers are the ticket into 10-Gigabit networking for thousands of gamers around the world. The brand entered the market with an interesting pitch: "Not everyone has Category 6 cabling that can carry 10 Gigabits per second, so where expensive network cards link at either 10G or 1G, ours also connect at 2.5G and 5G, and they are cheap and barely warm up. No, we won't mention that a 2.5G/5G link needs a chip supporting the NBASE-T standard at the other end of the wire, but who cares? We have a 10G network adapter for $150 retail!"

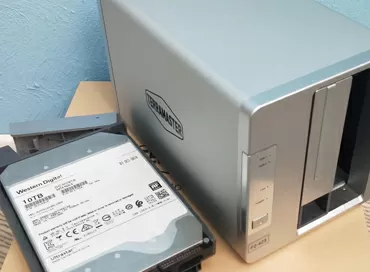

Of course, this product is aimed primarily at enthusiast gamers, but thanks to its low cost, Aquantia chips have spread into SMB-class desktop NAS units and Dell OptiPlex 7050 workstations, and they have been chosen by Nvidia, ASUS, Gigabyte, ASRock and many others.

On its website the company proudly states that its network controllers are designed for enterprise-segment client computers, that is, precisely for workstations that need fast file sharing with a server. We recently tested the ASRock X470 Taichi Ultimate gaming motherboard, which has an Aquantia AQC107 network controller on board, and somehow we skipped over its network performance. In this article I correct that omission and show whether Intel's and Mellanox's position in the 10G segment has been shaken, or whether these giants can keep sleeping soundly.

Test bench configuration:

Server:

- Intel Xeon E5-2603 v4

- 32 GB ECC DDR4-2400 RDIMM

- ASRock Rack EPC612D4U-2T8R

- VMware ESXi 6.7

- Intel X540-T2 NIC in PCIe Passthrough

- Hard disk drives: 3 x 10k SAS Seagate Savvio 10K6. The test bench is configured so that they take no part in the tests and do not affect speed.

- OSes:

  - FreeNAS 11.2 for Samba access (16 GB RAM for ARC), RAID-Z1, LZ4 off, dedupe off.

  - Windows Server 2016 (8 GB iSCSI LUN on a RAM disk)

Client:

- AMD Ryzen 5 1600

- 16 GB DDR4-3000 RAM

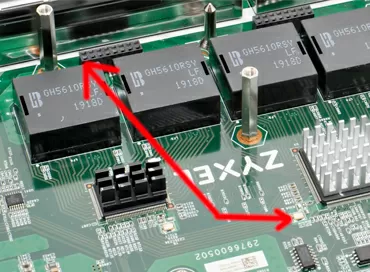

- ASRock X470 Taichi Ultimate

- Integrated Aquantia AQtion AQC107

- Add-in board Intel X550-T2

- OS and software:

  - Windows 10 x64

  - IOmeter for iSCSI

  - CrystalDiskMark for CIFS

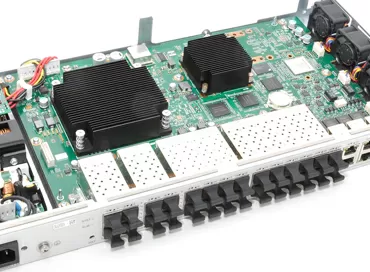

The server has a 10-Gigabit Intel X540-T2 card, handed to the guest operating system in PCI passthrough mode so that the guest could use its native drivers. The client has two network cards: the integrated Aquantia AQC107 and a discrete Intel X550-T2. Each was connected in turn with the same cable to the same server port, and the server software was configured to work from memory rather than from the disk subsystem.

The Intel card is designed for virtualization. It supports SR-IOV hardware resource sharing, has mechanisms for reducing CPU load in virtual environments, and lets you configure port aggregation in 802.3ad mode at the driver level. The Aquantia controller has none of this, not even the fancy game-acceleration software that some premium motherboard makers flaunt. Its Windows driver does not even offer hardware monitoring to show how the controller is doing, whereas Intel's does.

To tell the truth, I expected both cards to hit a gigabyte per second, leaving us to count crumbs and compare hundredths of a percent, but I was wrong. With modern server network cards, few people pay attention to built-in offload engines, assuming the CPU can handle any iSCSI or NFS traffic, yet the basic principle of offloading TCP packet processing is applied in every adapter, even the cheapest integrated Intel I350. Neither the Intel X550-T2 nor the AQtion AQC107 declares any iSCSI or NFS offloading; according to the specifications, the chips support the following offload features:

- Aquantia AQC107: MSI, MSI-X, LSO, RSS, IPv4/IPv6 checksums

- Intel X550-T2: MSI, MSI-X, Tx/Rx, IPsec, LSO, IPv4/IPv6 checksums

But of course nobody talks about the performance of the network card's own processor, and here everything is very simple: at speeds above 1 Gbit/s, the number of network packets that make up the file traffic starts to matter. The more packets are transmitted per second, the harder the network card has to work and the more powerful its processor needs to be. Part of the problem is solved by jumbo frame support, which is disabled by default on any network hardware for compatibility reasons. Increasing the packet size reduces the number of packets for the same amount of traffic, which eases the load on the network interface and raises its speed. If you do not configure the packet size, Windows, Linux, and FreeBSD all use 1500 bytes, which puts a heavy load on the network card in file-server use.
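To put rough numbers on this, here is a minimal sketch that estimates how many frames per second a card must handle to fill a 10 Gbit/s link. It assumes every frame carries a full MTU-sized payload and counts the standard Ethernet per-frame overhead on the wire; the 9000-byte value is used here only as a typical jumbo MTU, and the function name is purely illustrative.

```python
# Back-of-the-envelope frame rate at 10 Gbit/s line rate.
# Assumption: every frame carries a full MTU-sized payload.

LINK_BPS = 10_000_000_000  # 10 Gbit/s

# Bytes on the wire per frame in addition to the MTU payload:
# preamble + start delimiter (8), Ethernet header (14), FCS (4),
# inter-frame gap (12).
WIRE_OVERHEAD_BYTES = 8 + 14 + 4 + 12


def frames_per_second(mtu: int, link_bps: int = LINK_BPS) -> float:
    """Frames per second needed to saturate the link with MTU-sized frames."""
    bits_per_frame = (mtu + WIRE_OVERHEAD_BYTES) * 8
    return link_bps / bits_per_frame


for mtu in (1500, 9000):
    print(f"MTU {mtu}: about {frames_per_second(mtu):,.0f} frames/s")

ratio = frames_per_second(1500) / frames_per_second(9000)
print(f"Jumbo frames reduce the frame rate by a factor of about {ratio:.1f}")
```

Under these assumptions the card has to process a little over 800 thousand frames per second at MTU 1500 and fewer than 140 thousand with 9000-byte jumbo frames, which is why a weaker network processor benefits so much from a larger MTU.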

Testing

The first test uses the default operating system settings (MTU=1500). We use the simple and intuitive CrystalDiskMark with the minimum test file size of 50 MB, which fits entirely in the FreeNAS cache.

The difference in read speed is huge. Thanks to a faster processor and good drivers, the Intel X550-T2 leaves the little-known integrated chip no chance.

The iSCSI tests confirm what you have just seen: if you cannot change the MTU on your network for compatibility reasons, no Aquantia can replace a time-tested Intel card. The expensive server-grade X550-T2 is almost three times faster, but in general, for 10G these figures are not speed but a disgrace. So we raise the MTU on all three network cards, first to 9014 bytes. Incidentally, this is the maximum that the Intel drivers allow on Windows 10. The Aquantia AQtion goes up to 16 KB here, so we will test it with both values.

The situation is not merely corrected: the dark horse pulls ahead on read operations at both MTU values.

In the iSCSI test we were able to set the MTU only to 9014 because of Windows driver limitations, and here the Aquantia chip won again.

As for CPU load, in both cases the absolute values and the difference between the chips are insignificant. As a reminder, the client ran on a desktop AMD Ryzen 5 1600 with 12 threads.

Price

On average, a single-port network card based on the Aquantia AQC107 chip costs $155; look for the ASUS or Gigabyte boards made for gamers. The single-port Intel X550-T1 costs about $300, and the dual-port version around $330-350. On eBay you can buy an X550 for half the price, but there is a high risk of ending up with a fake; how to tell a genuine Intel network card from one soldered together by Chinese craftsmen is covered in our article.

Support from operating systems

Intel is doing much better with drivers. X550-T2 network cards are so expensive partly because they are supported out of the box by the vSphere 6.7 hypervisor, all Linux systems, and any other software. Aquantia requires manually installing drivers downloaded from the manufacturer's website, and support for ESXi 6.7 is out of the question.

Conclusions

We have looked at a fairly typical scenario in which the storage system runs in a virtual machine under FreeBSD or Windows and provides block or file access to a workstation. On the client side, the Aquantia AQC107 wins on both speed and price, and all that is required of the system administrator is to set the MTU to 9K or higher.

At the same time, on the server side, network cards such as the Intel X550/X540/X710 keep two significant advantages that Aquantia lacks: support in any operating system and SR-IOV, which, admittedly, is hardly in demand anywhere.

In any case, one look at the results of disk operations with a deep command queue removes all doubt: the AQtion AQC107 is the winner of our comparison.

P.S. I can't say why our tests did not reach the full bandwidth of the 10-Gigabit link. In theory, a memory-to-network-to-memory test should exceed 1 GB/s, but we barely reached 700 MB/s. This may be related to Intel X540-T2 support on the server or to high hypervisor overhead.
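For reference, the theoretical ceiling mentioned above can be estimated with a short sketch. It assumes plain TCP over IPv4 with no header options, full-sized frames, and decimal megabytes, and it ignores the iSCSI and SMB protocol headers, so the real ceiling is slightly lower. The function name and the 9000-byte jumbo MTU are illustrative assumptions, not part of the original test setup.

```python
# Rough upper bound on TCP payload throughput over 10 Gigabit Ethernet.
# Assumptions: IPv4 + TCP without options, every frame full-sized,
# iSCSI/SMB headers ignored, 1 MB = 1,000,000 bytes.

LINK_BPS = 10_000_000_000      # 10 Gbit/s line rate
WIRE_OVERHEAD_BYTES = 38       # preamble/SFD 8 + Ethernet header 14 + FCS 4 + gap 12
IP_TCP_HEADER_BYTES = 20 + 20  # IPv4 header + TCP header


def max_tcp_goodput_mb_s(mtu: int, link_bps: int = LINK_BPS) -> float:
    """Best-case TCP payload rate in MB/s for a given MTU."""
    payload = mtu - IP_TCP_HEADER_BYTES
    efficiency = payload / (mtu + WIRE_OVERHEAD_BYTES)
    return link_bps / 8 * efficiency / 1_000_000


MEASURED_MB_S = 700  # approximate peak reported in the P.S.

for mtu in (1500, 9000):
    ceiling = max_tcp_goodput_mb_s(mtu)
    share = MEASURED_MB_S / ceiling
    print(f"MTU {mtu}: ceiling about {ceiling:.0f} MB/s, "
          f"700 MB/s is {share:.0%} of it")
```

Under these assumptions the ceiling is a little under 1.2 GB/s at MTU 1500 and a little over 1.2 GB/s with jumbo frames, so the 700 MB/s we measured is well below what the link itself allows.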

Michael Degtjarev (aka LIKE OFF)

29.09.2018